Contributors:

Benedict Leung, Mariana Shimabukuro, Christopher Collins

Providing high-quality, personalized feedback on essays and documents can be exhausting and time-consuming. While teachers love the personal touch of handwritten notes, digital annotation tools often fall short, turning annotations into static marks or focusing too heavily on basic grammar rather than the writer’s actual intent.

Enter AnnotateGPT. It bridges the gap between the natural feel of pen-and-paper grading and the advanced capabilities of AI, transforming simple pen strokes into rich, context-aware feedback.

How It Works

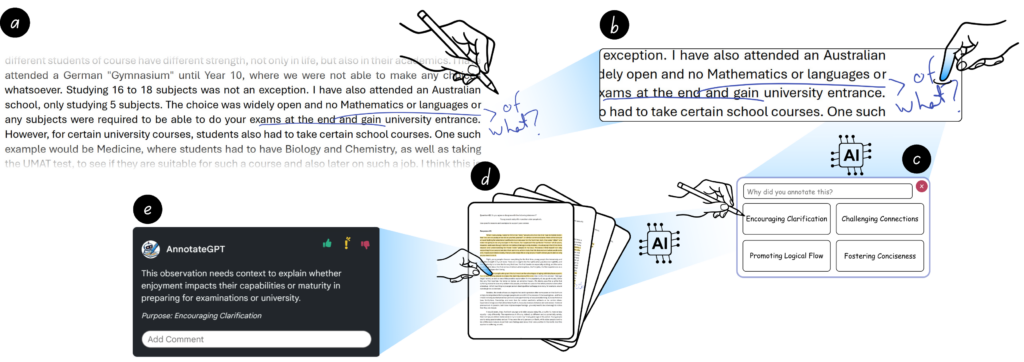

AnnotateGPT turns handwritten marks into a collaborative conversation between the human reviewer and the AI. The process is simple:

- Annotate: The user marks up a document with a digital pen, as in a standard annotation interface (e.g., circling a paragraph, highlighting a sentence, or scribbling a quick note).

- AI Guesses the Purpose: When the user taps their handwritten mark, the AI analyzes the strokes and the underlying text to guess what the reviewer is trying to correct (e.g., “grammar,” “sentence structure,” or “organization”).

- User Confirms: The user selects the correct purpose, guiding the AI on what to focus on.

- AI Generates Feedback: The AI expands that simple pen stroke into detailed, constructive, and contextually relevant feedback throughout the rest of the document.
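The four steps above can be sketched as a small pipeline. This is only an illustrative outline and not AnnotateGPT's actual implementation: the `Annotation` structure, the `PURPOSE_HINTS` keyword table, and the functions `guess_purposes`, `generate_feedback`, and `review` are all hypothetical names, and simple keyword matching stands in for the two AI agents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Annotation:
    """A pen mark and the text it covers (hypothetical structure)."""
    stroke_type: str            # e.g. "circle", "highlight", "note"
    target_text: str            # text underneath the mark
    handwritten_note: str = ""  # recognized handwriting, if any


# Hypothetical keyword table standing in for the purpose-guessing agent.
PURPOSE_HINTS = {
    "grammar": ["gram", "tense", "agreement"],
    "sentence structure": ["awkward", "run-on", "structure"],
    "organization": ["flow", "order", "organization"],
}


def guess_purposes(annotation: Annotation) -> List[str]:
    """Step 2: propose candidate purposes for an annotation."""
    note = annotation.handwritten_note.lower()
    matches = [
        purpose
        for purpose, hints in PURPOSE_HINTS.items()
        if any(hint in note for hint in hints)
    ]
    # With no usable hint, offer every purpose and let the user decide.
    return matches or list(PURPOSE_HINTS)


def generate_feedback(
    purpose: str, paragraphs: List[str], annotation: Annotation
) -> List[str]:
    """Step 4: stand-in for the feedback agent.

    Emits one placeholder comment for each paragraph other than the one
    the reviewer already annotated.
    """
    return [
        f"[{purpose}] Review paragraph {i + 1}: {text[:40]}"
        for i, text in enumerate(paragraphs)
        if annotation.target_text not in text
    ]


def review(
    annotation: Annotation,
    paragraphs: List[str],
    confirm: Callable[[List[str]], str],
) -> List[str]:
    """Run guess -> confirm -> generate for a single annotation."""
    candidates = guess_purposes(annotation)
    purpose = confirm(candidates)  # Step 3: the user picks the purpose
    return generate_feedback(purpose, paragraphs, annotation)


if __name__ == "__main__":
    essay = [
        "Me and him goes to school.",
        "The results was clear.",
        "In conclusion, it works.",
    ]
    mark = Annotation("circle", "Me and him goes", "awkward")
    # Here the "user" simply accepts the first suggested purpose.
    for comment in review(mark, essay, confirm=lambda options: options[0]):
        print(comment)
```

Passing the confirmation step in as a callback keeps the human decision explicit: the generator never runs until a purpose has been chosen by the reviewer.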

Why It Matters

In a study with 12 novice teachers that compared AnnotateGPT with a baseline pen-based tool without AI support, AnnotateGPT showed several benefits for evaluating student work:

- Saves Time: Reviewers can use quick annotations and let the AI do the heavy lifting of writing out the detailed explanation.

- Higher-Quality Feedback: Instead of just pointing out that a sentence is “awkward,” the AI explains why it’s awkward and suggests actionable fixes.

- Broader Coverage: The AI acts as a second set of eyes, catching bigger-picture issues like logical flow and organization that a hurried reviewer might miss.

Looking Beyond Education

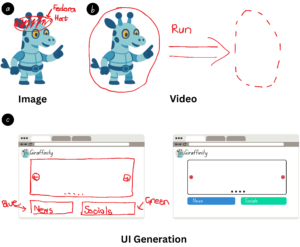

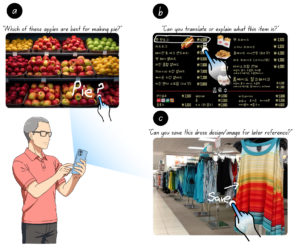

While AnnotateGPT is built for feedback in education, we envision a future where pen-based annotations become a universal way to interact with AI. Imagine using a digital pen to draw spatial constraints for AI image generation, sketch out UI designs, or even circle an object on a live camera feed to ask an AI questions about the physical world.

AnnotateGPT shows that human-AI collaboration doesn’t have to happen only through text; it can happen right at the tip of your pen.

Explore AnnotateGPT

Read our paper: https://doi.org/10.1145/3772318.3790867

Project Page: https://vialab.github.io/AnnotateGPT/

GitHub: https://github.com/vialab/AnnotateGPT

Publications

- Leung, B., Shimabukuro, M., & Collins, C. (2026). AnnotateGPT: Designing Human–AI Collaboration in Pen-Based Document Annotation. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems. Association for Computing Machinery.

@inproceedings{10.1145/3772318.3790867,

author = {Leung, Benedict and Shimabukuro, Mariana and Collins, Christopher},

title = {AnnotateGPT: Designing Human–AI Collaboration in Pen-Based Document Annotation},

year = {2026},

isbn = {9798400722783},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3772318.3790867},

doi = {10.1145/3772318.3790867},

abstract = {Providing high-quality feedback on writing is cognitively demanding, requiring reviewers to identify issues, suggest fixes, and ensure consistency. We introduce AnnotateGPT, a system that uses pen-based annotations as an input modality for AI agents to assist with essay feedback. AnnotateGPT enhances feedback by interpreting handwritten annotations and extending them throughout the document. One AI agent classifies the purpose of each annotation, which is confirmed or corrected by the user. A second AI agent uses the confirmed purpose to generate contextually relevant feedback for other parts of the essay. In a study with 12 novice teachers annotating essays, we compared AnnotateGPT with a baseline pen-based tool without AI support. Our findings demonstrate how reviewers used annotations to regulate AI feedback generation, refine AI suggestions, and incorporate AI-generated feedback into their review process. We highlight design implications for AI-augmented feedback systems, including balanced human-AI collaboration and using pen annotations as subtle interaction.},

booktitle = {Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems},

articleno = {811},

numpages = {25},

keywords = {annotation, digital pen, LLM, feedback},

location = {},

series = {CHI ’26}

}