We are happy to announce that Erik Paluka and Christopher Collins are headed to Halifax, Nova Scotia to present their work on TandemTable at the…

This afternoon, the Honourable Ed Holder, Minister of State (Science and Technology), made the John R. Evans Leaders Fund national announcement, which included $55,000 in equipment…

Carrie Demmans Epp from the TAGLab at University of Toronto will give a seminar entitled “Adaptive Technologies to Support Language Learning” as part of the…

Vialab is at capacity and not accepting new students for fall 2018. Dr. Christopher Collins, Canada Research Chair in Linguistic Information Visualization, is seeking highly…

The password security research of vialab researchers Christopher Collins and Rafael Veras, and collaborator Julie Thorpe from the Faculty of Business and Information Technology, has been featured…

This year, four of us are attending IEEE VIS in Paris, France to present work by lab members and collaborators. Lab members are presenting on two papers. DimpVis,…

Congratulations to Brittany Kondo, who successfully defended her M.Sc. thesis. Her work on object-centric temporal navigation for information visualizations featured two major projects, one on time-varying information…

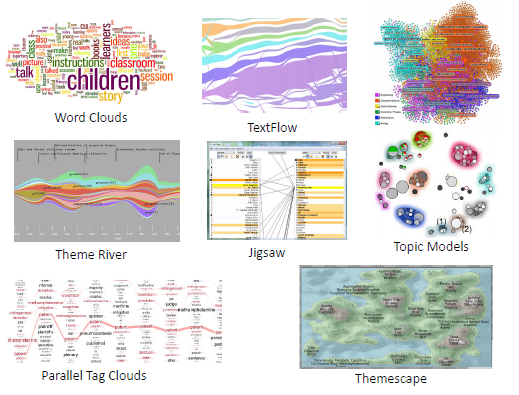

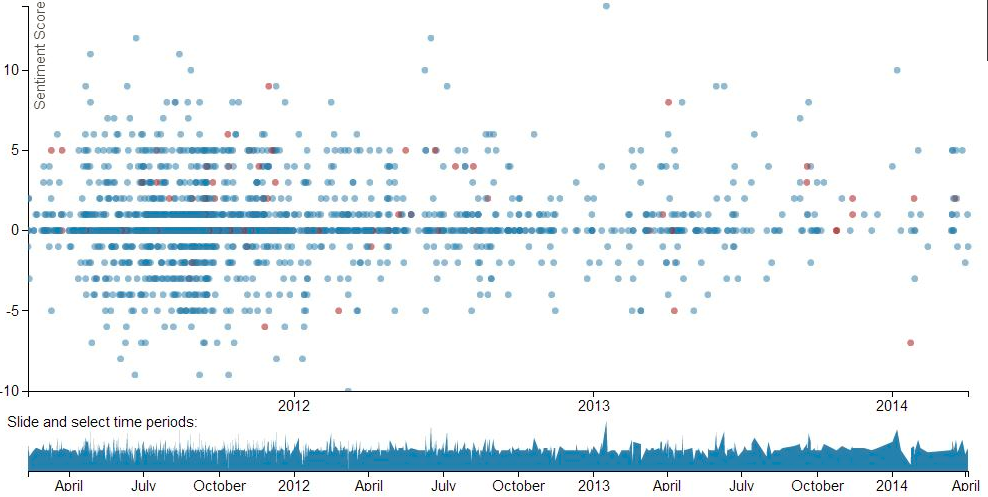

Today I gave a talk on sentiment and semantics in visual text analytics at CANVAS 2014, the Canadian Visual Analytics Summer School. The summer school…

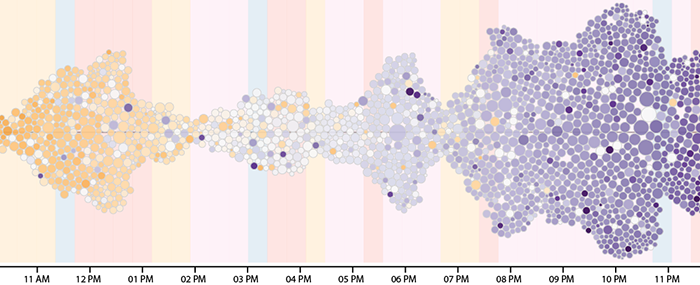

We have just launched a new visualization of emotion as expressed on Twitter. Visitors to SentimentState can use the tool to explore the overall positive/negative score…

The members of the vialab research group had the pleasure of meeting Princess Elettra Marconi Giovanelli of Italy when she visited our lab on June…